A few days ago, the Norwegian Magnus Carlsen defended his title as world chess champion against Sergey Karjakin: after 12 classical games the match had to go to a rapid tiebreak, and it was in that playoff that Carlsen finally managed to beat his rival.

These grandmasters are among the best in the world, but neither they nor the great masters of the past could do much in that other world championship, the Top Chess Engine Championship (TCEC), in which humans do not even compete. It is reserved for machines, or rather for chess engines that compete with each other and would leave any human player looking very bad.

We are no match for the machines, so... let them compete among themselves

The development of machines capable of playing chess has been under way for decades, but the high point of that effort came in 1997, when Deep Blue beat the then world champion, Garry Kasparov, in a six-game match.

At that time it was already clear that computing power would end up being too much for the best in the world (several grandmasters had already fallen long before Kasparov did), but after that defeat it was assumed that, as far as building chess engines that surpassed human players was concerned, the work was done. What challenge then remained in computer chess?

The answer was clear: develop the best chess engine in the world. One that could beat all the others.

Several projects joined that race, and they did not even need supercomputers to reach their full potential. A good example is the legendary Fritz, the program published by ChessBase that won the World Computer Chess Championship back in 1995, beating (how ironic) the prototype of what would become Deep Blue.

That program fell somewhat behind as the years went by, and today there are many striking contenders in a field where any small improvement can be a decisive advantage. Different versions of Komodo, Stockfish and Houdini currently dominate the rankings, although Fire, Jonny, Gull, Rybka and Andscacs also reached the third stage, the one before the Superfinal, with some chances in the latest TCEC, which we discuss below.

Keep one idea in mind: Carlsen would probably lose a match against any of them, and by a wide margin. So would Kasparov, Fischer, Capablanca and any other human player in the history of a sport that machines have come to dominate through sheer calculating power.

The Elo system puts us in our place (or does it?)

However, the race for the world's most powerful chess engine soon became frantic. So much so that a variety of championships and "adapted" Elo rating systems appeared, run by all kinds of organizations. These associations have taken on the job of publishing estimated Elo ratings for these programs, and naturally everyone has been trying to achieve the highest possible score.

Note, though, that these estimated Elo scores are not directly comparable to the Elo ratings of human players. Magnus Carlsen currently has 2,840 Elo points according to FIDE, but that does not necessarily mean that a chess engine rated 2,840 would be level with the great Norwegian grandmaster.

The mess is so big that there are more than a dozen different rating lists, among them CCRL, created in 2005, and the more recent IPON (2009), each taking different factors into account (time controls, hardware used, speculative analysis or "pondering", use of opening books, etc.). All of them give not only the estimated Elo scores but also the margins of error that come with those estimates.
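To give a feel for where an estimated score and its margin of error can come from, here is a minimal sketch. It assumes the standard logistic Elo model and a simple normal approximation over one engine's results against another; the function names and the sample result are hypothetical, and the real lists use their own, more elaborate statistical tools.

```python
import math

def elo_diff(score_fraction):
    """Elo difference implied by an average score per game (0 < fraction < 1)."""
    return -400.0 * math.log10(1.0 / score_fraction - 1.0)

def elo_estimate(wins, draws, losses, z=1.96):
    """Return (estimate, low, high) in Elo points for one engine versus another.

    Simplified sketch: it assumes independent games and a result that is not
    so lopsided that the interval touches 0% or 100%.
    """
    games = wins + draws + losses
    mean = (wins + 0.5 * draws) / games
    # Per-game variance of the score, which takes the values 1, 0.5 and 0.
    variance = (wins * (1.0 - mean) ** 2
                + draws * (0.5 - mean) ** 2
                + losses * (0.0 - mean) ** 2) / games
    margin = z * math.sqrt(variance / games)
    return elo_diff(mean), elo_diff(mean - margin), elo_diff(mean + margin)

# Hypothetical result: 30 wins, 50 draws and 20 losses over 100 games.
estimate, low, high = elo_estimate(30, 50, 20)
print(f"{estimate:+.0f} Elo (roughly {low:+.0f} to {high:+.0f})")
```

Even with 100 games the interval is wide, which is why the lists publish margins of error alongside the scores.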

One of the oldest and most reputable lists is that of the SSDF (Swedish Chess Computer Association), according to which the most powerful chess engine in the world is Komodo 9.1, with a score of 3,366 points. We have to go down to 38th place on this list to find Pro Deo 2.0, the first engine below Carlsen's 2,840 points. This list is not updated very often, and CCRL and CEGT are usually the most widely accepted, though opinions differ among those who follow this field.

Hello, I am a machine and I am the TCEC world champion

The drive to determine exactly which chess engine is the best in the world has given rise not only to several lists that each claim to be the most coherent and most "official". The same thing happens with the championships that try to establish which program is the best at any given time.

These tournaments are in fact almost symbolic and serve mainly as a marketing tool, because the continuously updated rating lists have become a much more reliable measure of these chess programs. Some tournaments also allow any hardware combination (up to a limit: no supercomputers or clusters), and the limited number of games against each rival means the final result could be very different if the same cycle of matches were played just a few days later.

Even so, as we said, commercial companies clearly have an interest in winning those titles, two of which stand out: the Top Chess Engine Championship (TCEC) and the World Computer Chess Championship, the latter somewhat less relevant because it requires the "physical" presence of the machines (or rather, of their operators) while the championship is held.

The TCEC has been held since 2010, and its current format is very different from that of the FIDE World Championship. TCEC consists of a number of "stages" within the same year, each lasting several weeks, with the games played and broadcast live online.

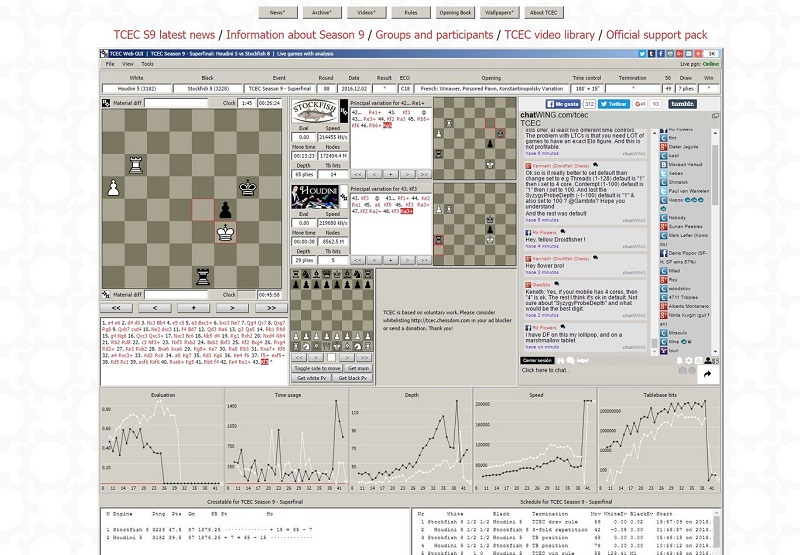

As explained in the Chess Programming Wiki, the current edition ('Season 9') began on May 1 and will end this December. There are 32 engines competing in a championship that includes commercial programs as well as independent ones. The engines will end up playing 992 games in this edition, divided between the three stages and the current Superfinal, which consists of 100 games. These engines play constantly, 24 hours a day.

The games use time controls that vary, from 120 + 15 (120 minutes for each machine, plus 15 seconds added per move) to the 180 + 15 of the Superfinal. There are also restrictions both on speculative analysis ("pondering") and on opening books, which can be used only for the first 2 to 8 moves depending on the stage, except in the Superfinal, where a "variable" opening book is used.
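To see what a control such as 120 + 15 means in practice, here is a small sketch of the thinking time it gives one engine. It assumes the increment is credited after each completed move (the usual Fischer-style increment); the function name and the 60-move game length are only illustrative.

```python
def total_budget_seconds(base_minutes, increment_seconds, moves):
    """Maximum thinking time one engine can spend on its first `moves` moves.

    Assumes the increment is credited after each completed move, so the
    increment earned by the last move cannot be spent within those moves.
    """
    return base_minutes * 60 + increment_seconds * max(moves - 1, 0)

# Compare the two controls mentioned above over a hypothetical 60-move game.
for base, inc in ((120, 15), (180, 15)):
    budget = total_budget_seconds(base, inc, 60)
    print(f"{base} + {inc}: {budget / 60:.1f} minutes in total, "
          f"about {budget / 60 / 60:.1f} minutes per move")
```

With both engines on the clock, a long game at these controls can easily run for several hours.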

Seeding is determined by a weighted Elo rating based on scores from other lists such as those discussed above, but as the championship progresses each program gains or loses points, ending up with an "internal" Elo score specific to that tournament.
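How such an "internal" score can drift from its seed is easy to sketch with the classic Elo update rule. This is only an illustration of the general mechanism: the K-factor, the seed ratings and the sequence of results below are hypothetical, and nothing here is taken from TCEC's actual rating procedure.

```python
def expected_score(rating, opponent_rating):
    """Expected score (between 0 and 1) under the standard Elo model."""
    return 1.0 / (1.0 + 10.0 ** ((opponent_rating - rating) / 400.0))

def update_ratings(rating_a, rating_b, score_a, k=10.0):
    """Return both new ratings after one game.

    score_a is 1 for a win by A, 0.5 for a draw and 0 for a loss.
    The K-factor is an assumption chosen for illustration.
    """
    delta = k * (score_a - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta

# Hypothetical seeds and results (from engine A's point of view).
a, b = 3200.0, 3150.0
for result in (0.5, 1.0, 0.5, 0.0):
    a, b = update_ratings(a, b, result)
    print(f"A: {a:.1f}  B: {b:.1f}")
```

Note that a draw costs the higher-rated engine a little, because it was expected to score more than half a point.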

Another key element of this tournament is that the hardware is the same for all competitors. The current Superfinal uses a configuration with a Supermicro X10DRL-i motherboard, two ten-core Intel Xeon E5 2630v4 processors at 2.4 GHz, 128 GB of RAM and a 240 GB SSD. The operating system is Windows Server 2012 R2.

The current championship, the ninth, is still under way and already in its final phase: the Superfinal pits Houdini 5 (internal Elo of 3,182 points) against Stockfish 8 (internal Elo of 3,228 points). The outcome already seems decided: at game 88, Stockfish leads by 47.5 points to 39.5, and Houdini is very unlikely to make up the lost ground: it has only six games left with white and would have to win two of them, something really difficult. And at this level, experts say, winning with the black pieces is extraordinarily rare.

So it looks like we have our next TCEC world champion. And note that there is something especially remarkable about Stockfish: it is an open source engine that anyone can download, both to play against and to study its code (on GitHub), while Houdini is a commercial product sold under licenses such as the Standard one (up to 6 cores and 4 GB of hash memory).